Akash Network redefines access to high-performance computing by pooling underutilized GPUs and CPUs into a permissionless marketplace, enabling developers to deploy ML models on DePIN clouds at fractions of centralized costs. At a time when AI training demands skyrocket, Akash delivers NVIDIA H100 GPUs for $2.60 per hour and H200 GPUs for $3.15 per hour, empowering projects from fine-tuning to inference without vendor lock-in.

This pricing, drawn from Akash Supercloud offerings, undercuts traditional providers while scaling to massive clusters like 1024 H100s at $2.00 per hour. Providers earn AKT tokens, fostering a self-sustaining ecosystem where supply meets demand dynamically. As a seasoned observer of DePIN landscapes, I see Akash bridging Render's rendering focus with io.net's ML emphasis, creating versatile DePIN cloud compute for broader applications.

Akash GPU Rentals Outpace Centralized Clouds

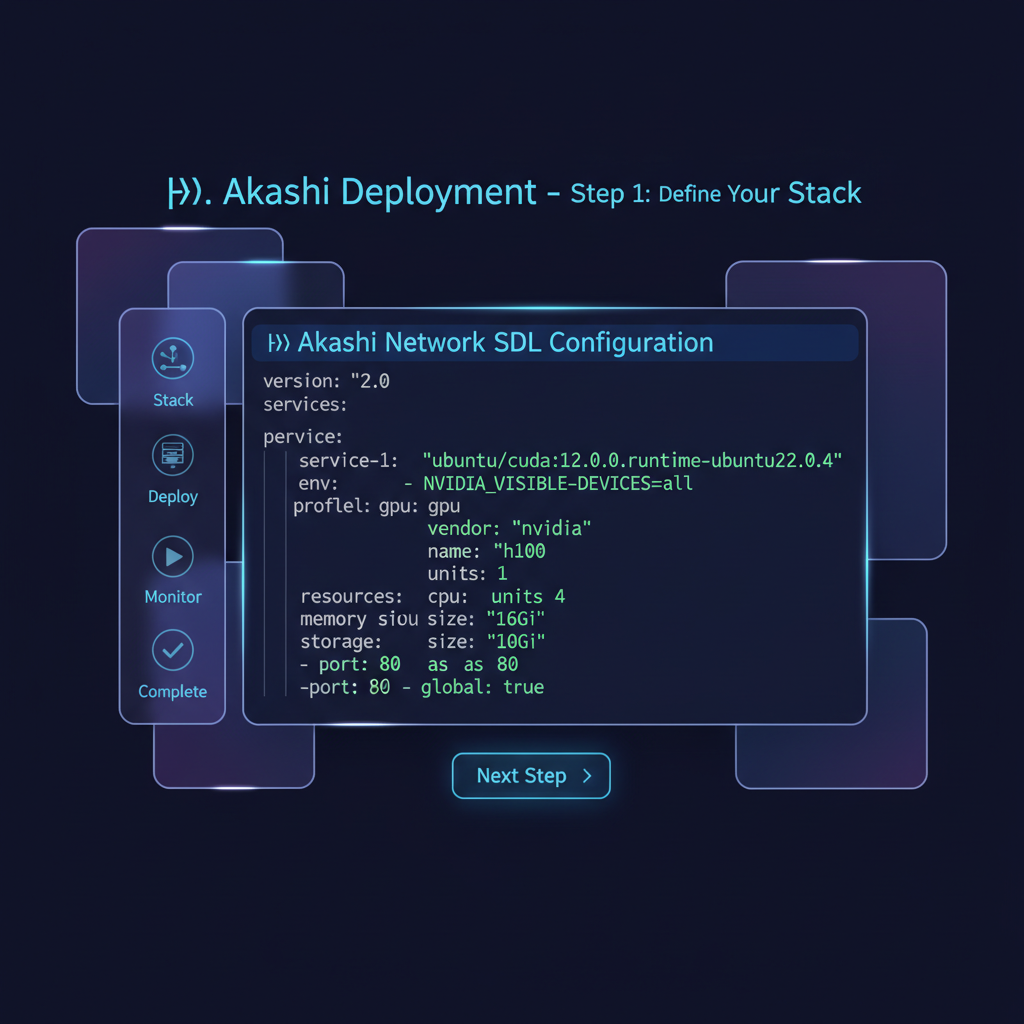

Traditional giants like AWS charge premiums for similar hardware, often exceeding $4 per hour for H100 equivalents amid shortages. Akash's decentralized model sidesteps this through open bidding, where providers compete on price, uptime, and specs. RTX 4090s with 24GB VRAM sit at the low end of marketplace pricing (around $0.64 per hour on average), but the real draw lies in enterprise-grade H100s at $2.60 per hour. This cost structure lets startups train models that would otherwise strain budgets, opening decentralized GPU rental to smaller teams.
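To make the comparison concrete, here is a minimal sketch of the arithmetic using the two rates quoted above. The $4.00 figure is treated as a floor for centralized pricing, and the 8-GPU, 72-hour job is a hypothetical workload chosen only for illustration.

```python
# Hourly rates quoted in the article (USD per GPU-hour).
AKASH_H100 = 2.60
CENTRALIZED_H100 = 4.00  # the "$4 per hour" figure, treated here as a floor


def savings_pct(akash_rate: float, centralized_rate: float) -> float:
    """Percentage saved by paying akash_rate instead of centralized_rate."""
    return (centralized_rate - akash_rate) / centralized_rate * 100


def run_cost(rate_per_gpu_hour: float, gpus: int, hours: float) -> float:
    """Total cost of a job that holds `gpus` GPUs for `hours` hours."""
    return rate_per_gpu_hour * gpus * hours


if __name__ == "__main__":
    # Hypothetical job: 8 GPUs held for 72 hours.
    print(f"Savings: {savings_pct(AKASH_H100, CENTRALIZED_H100):.1f}%")
    print(f"Akash: ${run_cost(AKASH_H100, 8, 72):,.2f}")
    print(f"Centralized: ${run_cost(CENTRALIZED_H100, 8, 72):,.2f}")
```

At exactly $4.00 per hour the saving works out to 35%; any centralized rate above that floor widens the gap.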

Security bolsters the appeal: deployments run in isolated containers on blockchain-verified nodes, minimizing risks of data leaks or downtime. Scalability shines too; users bid for clusters matching workloads, from single-node inference to distributed training. In my hybrid portfolio approach, Akash represents resilient exposure, balancing AKT's modest $0.3568 price against tangible utility growth.

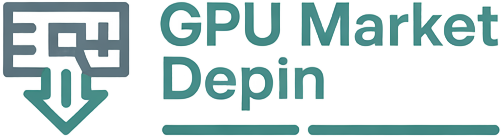

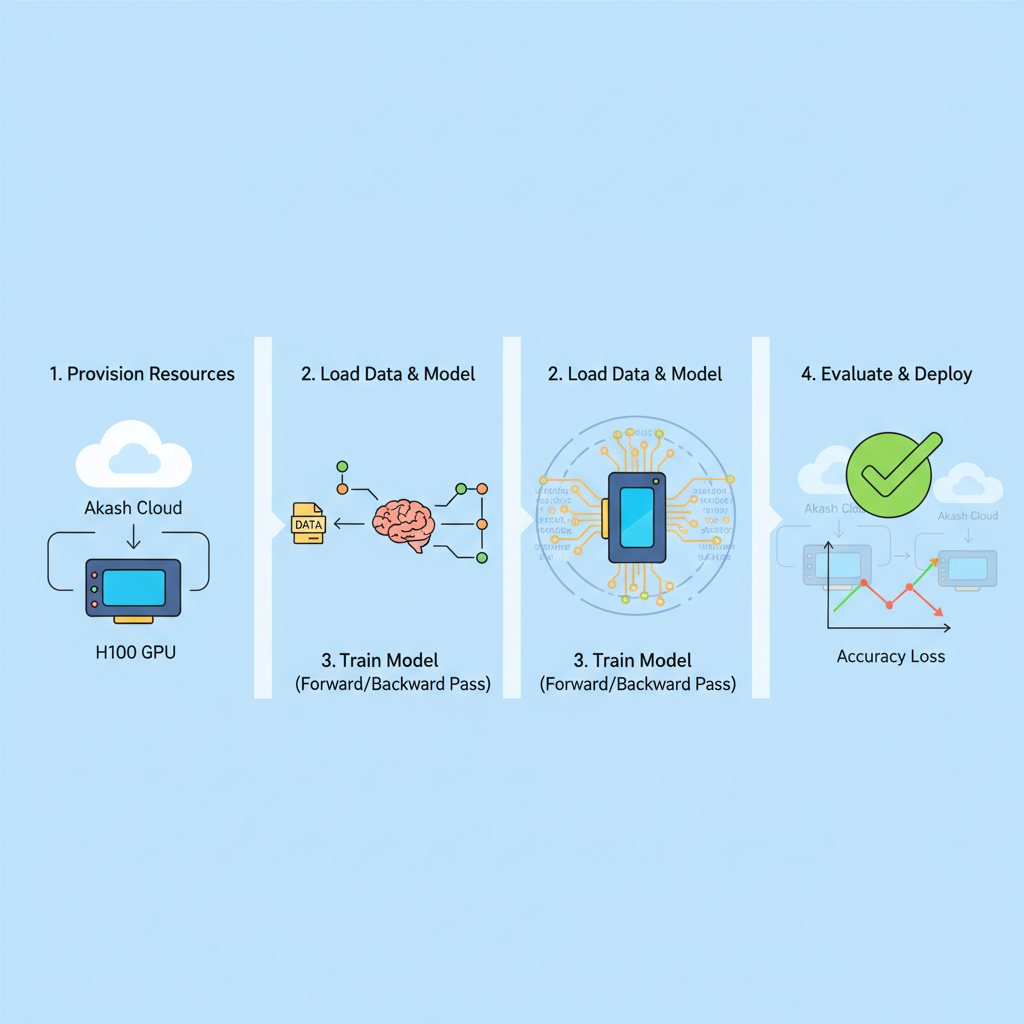

Streamlining ML Model Deployment on Akash

Deploying an ML model on Akash starts with the Stack Definition Language (SDL), a YAML-based blueprint for your containerized app. Define GPU requirements, such as an H100 priced around $2.60 per hour, alongside CPU cores and storage. Submitting the SDL through the Akash console or CLI opens the deployment to bids from providers, and the provider is then selected based on cost and performance.
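The selection step can be pictured as a filter followed by a minimum. The sketch below is illustrative only: the `Bid` fields, provider labels, and the uptime threshold are hypothetical, and real Akash bids are settled on-chain rather than through a helper like this.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    provider: str          # hypothetical provider label
    price_per_hour: float  # USD per GPU-hour
    uptime: float          # fraction of time available, 0.0 to 1.0


def pick_bid(bids: list[Bid], min_uptime: float) -> Optional[Bid]:
    """Return the cheapest bid meeting the uptime floor, or None if none qualify."""
    eligible = [b for b in bids if b.uptime >= min_uptime]
    return min(eligible, key=lambda b: b.price_per_hour, default=None)


if __name__ == "__main__":
    # Made-up bids for a single H100 lease.
    bids = [
        Bid("provider-a", 2.45, 0.97),
        Bid("provider-b", 2.60, 0.995),
        Bid("provider-c", 2.80, 0.999),
    ]
    print(pick_bid(bids, min_uptime=0.99))
```

Here the cheapest bid is skipped because it misses the uptime floor, which is the trade-off between cost and performance that the bidding step is meant to expose.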

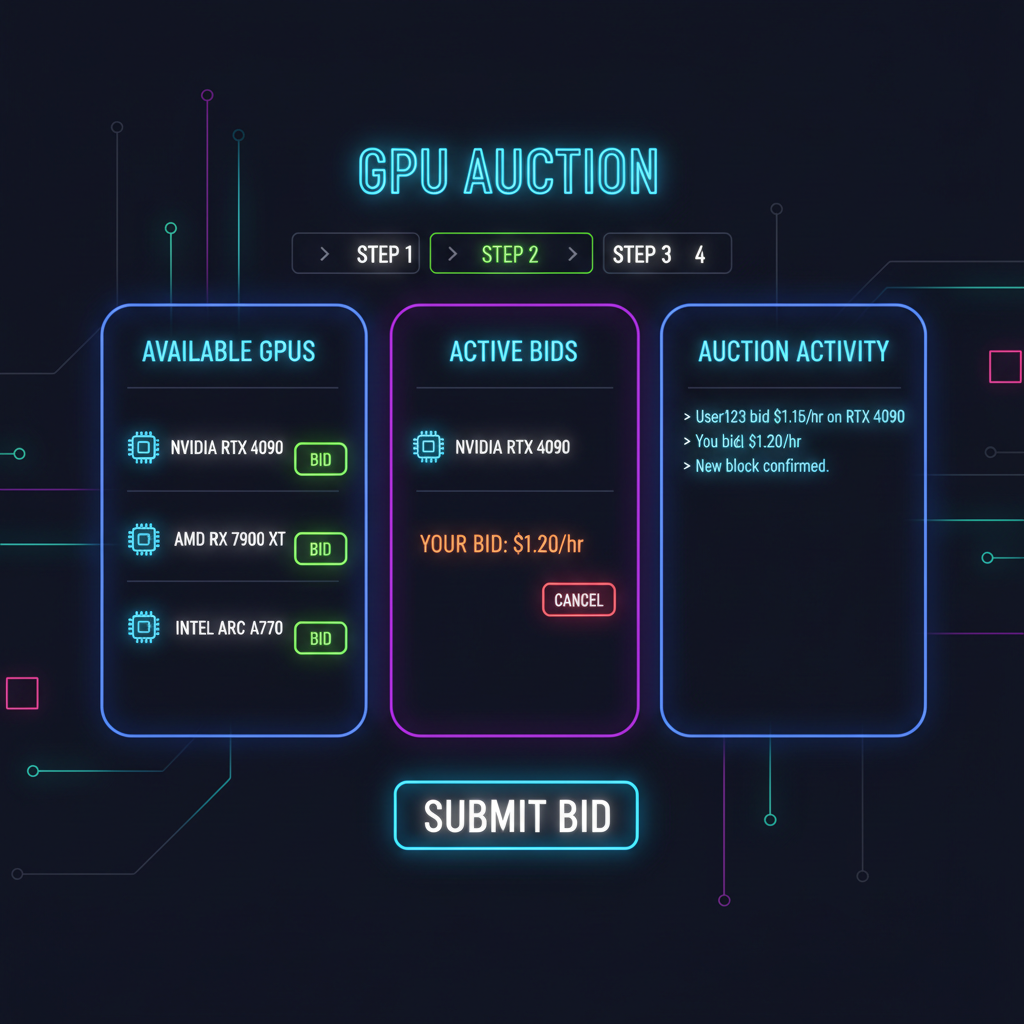

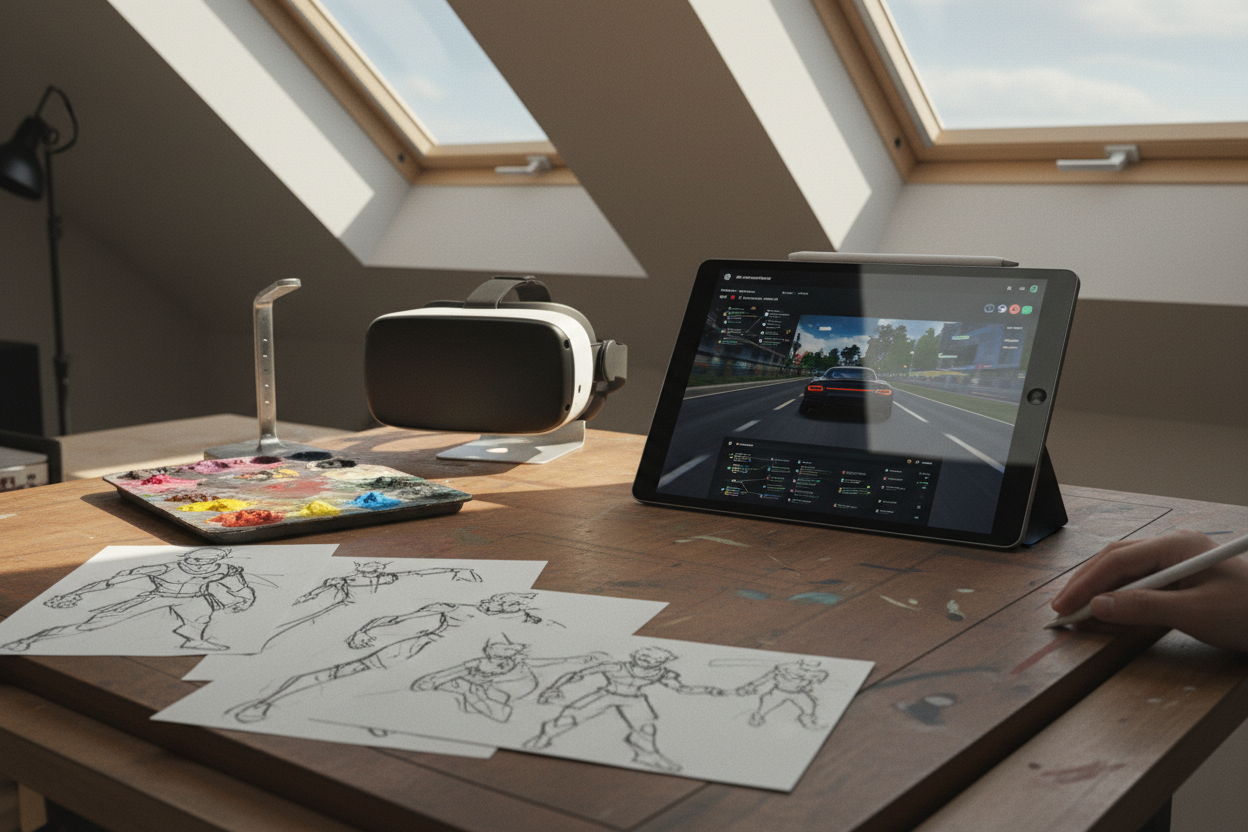

Once matched, Kubernetes-orchestrated deployments launch seamlessly. For instance, fine-tune a Llama model using no-code tools integrated into Akash's marketplace, then expose APIs for inference. Providers handle networking, often with robust bandwidth, which answers common Reddit questions about network speeds and on-demand availability. This frictionless flow is what makes Akash a credible decentralized AWS alternative, without the provisioning lag of centralized clouds.

Akash Network (AKT) Price Prediction 2027-2032

Short-term outlook amid GPU demand surge and DePIN cloud adoption for ML models

| Year | Minimum Price (USD) | Average Price (USD) | Maximum Price (USD) | YoY % Change (Avg from Prev) |
|---|---|---|---|---|
| 2027 | $0.50 | $0.85 | $1.60 | +136% |
| 2028 | $0.90 | $1.50 | $2.80 | +76% |
| 2029 | $1.40 | $2.50 | $4.70 | +67% |
| 2030 | $2.20 | $4.00 | $7.50 | +60% |
| 2031 | $3.30 | $6.00 | $11.20 | +50% |
| 2032 | $5.00 | $9.00 | $16.80 | +50% |

Price Prediction Summary

Akash Network (AKT) is positioned for robust growth due to escalating demand for cost-effective decentralized GPU rentals in AI/ML workloads. From a 2026 baseline of $0.36, average prices are forecasted to climb to $9.00 by 2032, with maximum potentials reaching $16.80 in bullish market cycles driven by DePIN adoption.
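The year-over-year column in the forecast can be reproduced from the average prices and the $0.36 baseline. This is only a sketch of that arithmetic; it checks the table's internal consistency and says nothing about whether the forecast itself is sound.

```python
# Average forecast prices from the table (USD), plus the 2026 baseline.
BASELINE_2026 = 0.36
AVERAGE_BY_YEAR = {
    2027: 0.85,
    2028: 1.50,
    2029: 2.50,
    2030: 4.00,
    2031: 6.00,
    2032: 9.00,
}


def yoy_changes(baseline: float, averages: dict[int, float]) -> dict[int, int]:
    """Percent change of each year's average over the previous year's, rounded."""
    changes = {}
    prev = baseline
    for year in sorted(averages):
        changes[year] = round((averages[year] - prev) / prev * 100)
        prev = averages[year]
    return changes


if __name__ == "__main__":
    for year, pct in yoy_changes(BASELINE_2026, AVERAGE_BY_YEAR).items():
        print(f"{year}: +{pct}%")
```

The results match the table's last column: +136%, +76%, +67%, +60%, +50%, +50%.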

Key Factors Affecting Akash Network Price

- Surging GPU demand for AI/ML model training and deployment

- Competitive pricing (e.g., H100 at $1.57-$2.60/hr) vs. centralized clouds

- Expansion of DePIN ecosystem and blockchain scalability improvements

- Crypto market bull cycles and institutional adoption

- Competition from io.net, Render Network, and regulatory uncertainties

- Technological advancements in decentralized compute marketplaces

Disclaimer: Cryptocurrency price predictions are speculative and based on current market analysis. Actual prices may vary significantly due to market volatility, regulatory changes, and other factors. Always do your own research before making investment decisions.

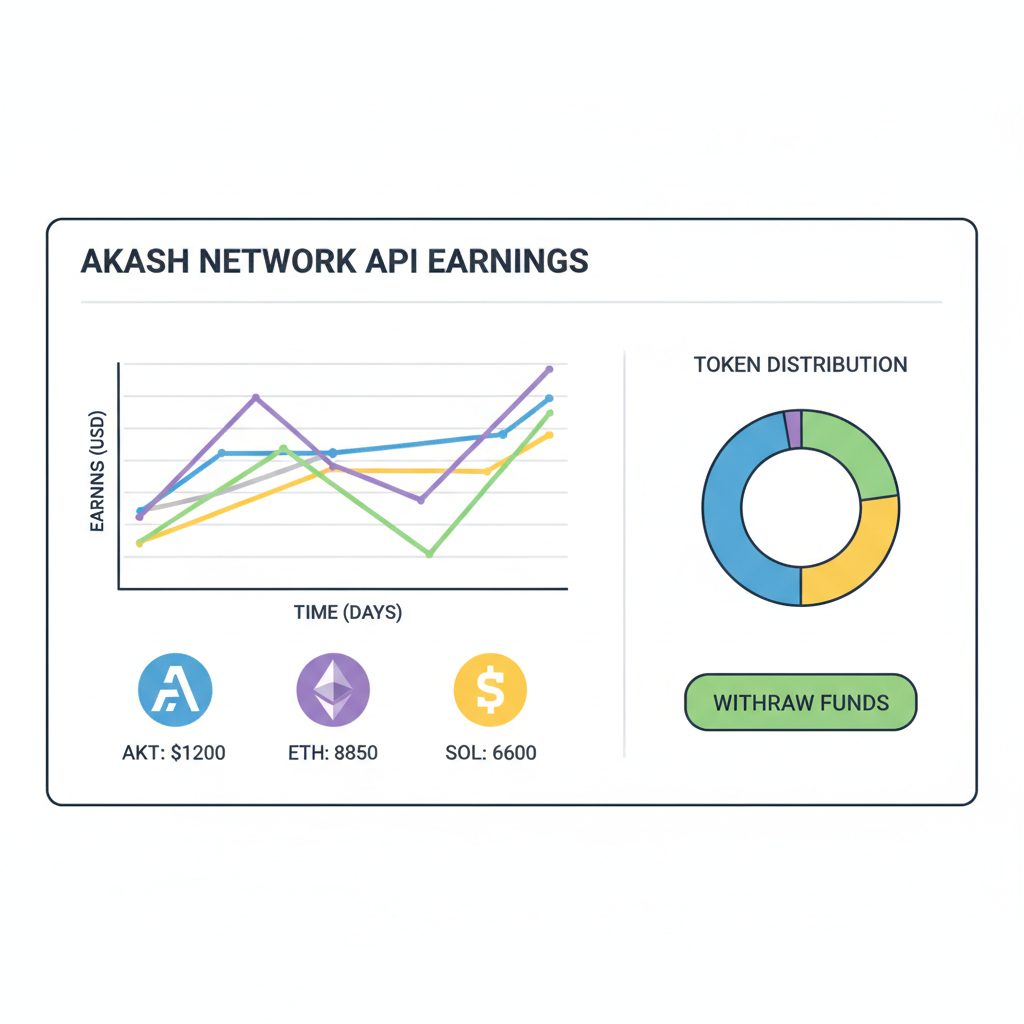

Monetization follows naturally; deploy trained models as services, earning from usage fees in AKT. Akash's evolution from general-purpose container hosting to AI powerhouse underscores its adaptability. While io.net hones ML scalability, Akash's public utility ethos ensures CPU-GPU hybrids for diverse workloads, from simulations to edge AI.

Real-World Advantages for AI Builders

Builders report 50-70% savings versus hyperscalers, with deployments in minutes. A tutorial on YouTube demonstrates SDL crafting for GPU networks, revealing straightforward Docker integrations. No overclocking mysteries here; transparent specs cover VRAM, PCIe lanes, and interconnects, vital for stable training.

In portfolios, I weight Akash for its ecosystem depth: AKT at $0.3568 reflects caution after a 24-hour dip, yet GPU utilization trends signal upside. Providers monetize idle rigs, consumers scale without fixed capacity limits, all on a trustless ledger. This synergy positions Akash as the go-to network for GPU needs in 2026's compute race.

- Permissionless bidding cuts intermediaries

- H100 at $2.60/hr enables accessible fine-tuning

- Containerized security for production ML

- Hybrid CPU-GPU stacks for full-stack apps

These strengths make Akash a cornerstone for DePIN cloud compute, where idle hardware transforms into revenue streams without centralized gatekeepers. Developers sidestep the opacity plaguing some platforms, gaining full visibility into node attestations and lease terms.

Example SDL YAML: Deploying GPU-Accelerated AI Model on Akash Network

The Akash Stack Definition Language (SDL) uses YAML to declaratively define cloud-native deployments. For AI/ML models requiring GPU acceleration, the compute profile specifies the GPU model and units available from DePIN providers. Here's a balanced example for a typical inference service:

```yaml
---
version: "2.0"

services:
  ai-model:
    image: yourregistry/your-ml-inference:latest
    command:
      - python
      - /app/inference_server.py
    expose:
      # Publish container port 8080 to the public internet.
      - port: 8080
        to:
          - global: true

profiles:
  compute:
    ai-model:
      resources:
        cpu:
          units: 4
        memory:
          size: 16Gi
        storage:
          size: 50Gi  # placeholder; size this for your model weights
        gpu:
          units: 1
          attributes:
            vendor:
              nvidia:
                - model: a100
                  ram: 80Gi
                  interface: pcie
  placement:
    akash:
      pricing:
        ai-model:
          denom: uakt
          amount: 100000  # placeholder maximum bid; tune to current market rates

deployment:
  ai-model:
    akash:
      profile: ai-model
      count: 1
```

This configuration requests a single high-end A100 GPU with 80GB of memory, suitable for large language models or computer vision tasks, and exposes an HTTP endpoint on port 8080 for model inference. The storage size and pricing amount are placeholders. Customize the container image, GPU model (e.g., RTX 4090 for cost savings), and resources based on your workload profiling. Deploy via the Akash CLI for seamless integration with the decentralized GPU marketplace.

Hands-On Deployment: A Step-by-Step Blueprint

Picture this: you're scaling a computer vision model that chews through terabytes of image data. On Akash, specify an H100 at $2.60 per hour in your SDL file, pair it with persistent volumes for datasets, and bid across the network. Providers respond in seconds, often undercutting each other to secure the lease. My experience advising compute firms highlights how this beats the weeks-long queues at legacy clouds, especially for bursty AI workloads.

Post-deployment, monitor via the Akash dashboard: metrics on GPU utilization, memory bandwidth, even tenant-specific logs. For H200 rentals at $3.15 per hour, expect even higher throughput on memory-intensive tasks like large language model training. This isn't hype; it's the quiet revolution in DePIN GPU rental, where AKT's $0.3568 valuation belies surging on-chain activity from model deploys.

Akash in the DePIN Arena: Edges Over Rivals

Stack Akash against io.net or Render, and its generalist prowess emerges. io.net drills into ML clusters, but Akash layers CPU rentals atop GPUs for holistic stacks - think inference servers with attached databases. Render excels in 3D rendering, yet Akash absorbs those use cases while expanding to simulations and analytics. In a Medium rundown of 10 decentralized clouds challenging AWS, Akash leads for its mature Kubernetes backing and no-code AI tools.

| GPU Model | VRAM | Akash Price/hr | Edge vs Centralized |
|---|---|---|---|
| H100 | 80GB | $2.60 | 40% cheaper |
| H200 | 141GB | $3.15 | Scales to 1024-node |
| A100 | 80GB | $1.34 (market avg) | On-demand bidding |
| RTX 4090 | 24GB | $0.64 (market avg) | Entry-level access |

This table underscores the value: H100s at $2.60 per hour aren't outliers but norms in Akash Supercloud, with bulk deals dipping to $2.00 for massive arrays. Reddit threads probe these rates' fine print - on-demand, standard clocks, gigabit-plus nets - confirming no hidden catches. Providers thrive too, listing rigs permissionlessly and earning AKT amid network growth.
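One practical way to read the table is to pick the cheapest card whose VRAM covers the model. The sketch below hard-codes the table's figures; the `cheapest_fit` helper and the VRAM requirements passed to it are hypothetical and ignore throughput, interconnect, and availability.

```python
from typing import Optional

# (VRAM in GB, price in USD per hour) taken from the table above.
GPU_CATALOG = {
    "H100": (80, 2.60),
    "H200": (141, 3.15),
    "A100": (80, 1.34),
    "RTX 4090": (24, 0.64),
}


def cheapest_fit(required_vram_gb: float) -> Optional[str]:
    """Return the lowest-priced GPU with at least the required VRAM, or None."""
    candidates = [
        (price, name)
        for name, (vram, price) in GPU_CATALOG.items()
        if vram >= required_vram_gb
    ]
    if not candidates:
        return None
    # Tuples sort by price first, so min() yields the cheapest option.
    return min(candidates)[1]


if __name__ == "__main__":
    for need in (20, 48, 100, 200):
        print(f"{need} GB -> {cheapest_fit(need)}")
```

On VRAM alone, an A100 beats an H100 for any model that fits in 80GB, so the H100's premium only pays off when its extra throughput shortens the job enough to offset the higher hourly rate.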

From a portfolio lens, Akash's hybrid utility tempers volatility. At $0.3568 after a mild 24-hour dip, AKT trades at a discount to peers, yet GPU lease volumes hint at catalysts. I advocate 5-10% allocations here for DePIN exposure, blending the reliability of a decentralized AWS alternative with token accrual from fees.

Future-Proofing AI with Akash's Momentum

Looking ahead, Akash's no-code fine-tuning platform lowers barriers further, letting enthusiasts train and sell models marketplace-style. Integrate with tools like Hugging Face, deploy at $2.60 per hour H100 scale, and tap global demand. Builders I've guided report inference latencies rivaling bare metal, thanks to optimized node fabrics.

Challenges persist - bandwidth variability, provider churn - but blockchain audits and slashing enforce SLAs. As DePIN matures, Akash's public utility roots position it to capture Web3's compute surge, from DeFi oracles to autonomous agents. Providers with RTX 5090s at $1.25 per hour entry points keep the floor competitive, drawing more builders to Akash for ML model deployment.

Ultimately, Akash Network GPU rentals aren't just cheaper; they're smarter infrastructure for an AI-driven world, where cost, control, and capability converge on-chain.
